Does 2-UAcomBot respect robots.txt?

2-UAcomBot policy

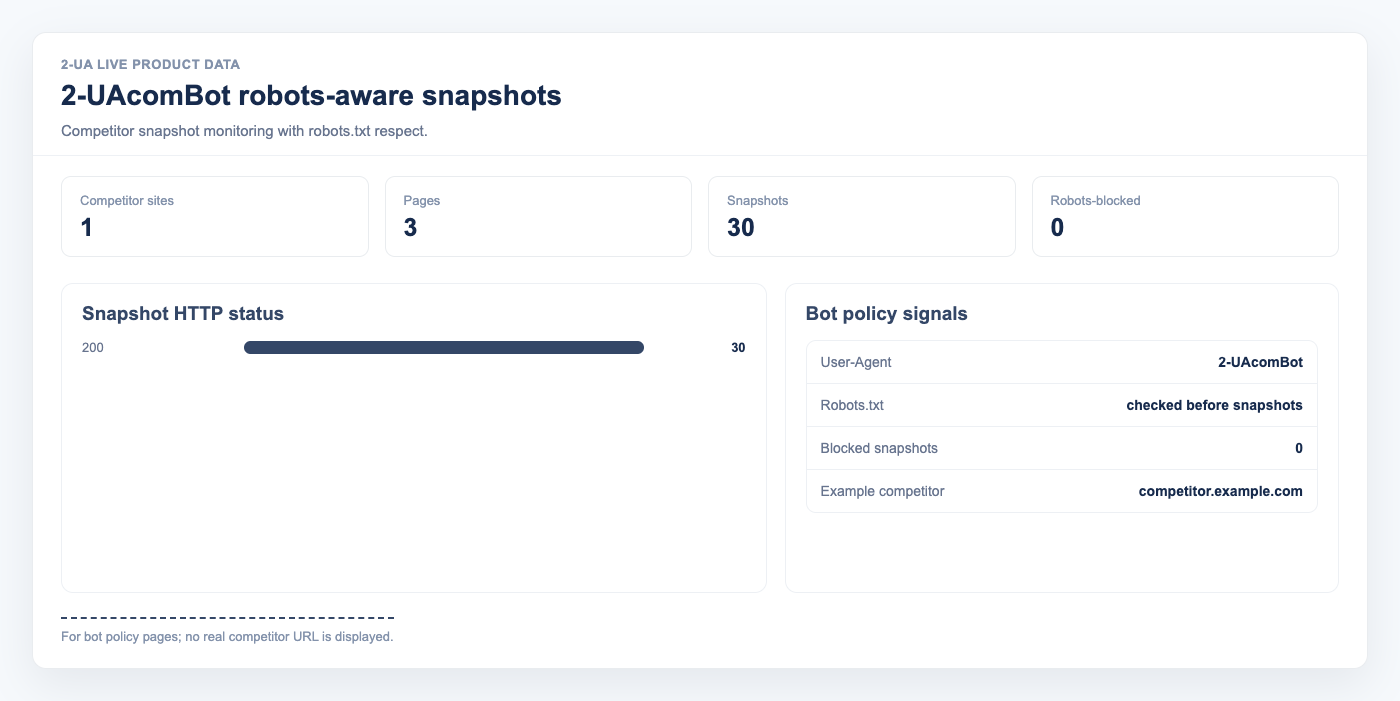

Yes. 2-UAcomBot fetches and obeys /robots.txt on every target host before taking a snapshot. If your rules disallow the target URL for our bot, we never fetch the page at all. The snapshot is marked "blocked by robots.txt" in our database, and the change-diff reports skip that URL while the block is in effect.

How do I identify the bot in my logs?

Desktop snapshot requests send this exact User-Agent:

Mozilla/5.0 (compatible; 2-UAcomBot/1.0; +https://2-ua.com/support/robots)

The product token is 2-UAcomBot. We also send X-AGENT: 2-UA as a secondary signal.
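
As a rough illustration of how you might pick these requests out of an access log, here is a minimal Python sketch. It assumes your log lines include the full User-Agent string. The names `BOT_TOKEN`, `is_2ua_bot`, and `bot_lines` are hypothetical helpers for this example, not part of any 2-UA tooling.

```python
import re

# Product token from the User-Agent shown above, followed by a version number.
BOT_TOKEN = re.compile(r"\b2-UAcomBot/\d+(?:\.\d+)*")


def is_2ua_bot(user_agent: str) -> bool:
    """Return True if the User-Agent value contains the 2-UAcomBot product token."""
    return BOT_TOKEN.search(user_agent) is not None


def bot_lines(log_lines):
    """Yield only the log lines that mention the bot's product token."""
    for line in log_lines:
        if BOT_TOKEN.search(line):
            yield line
```

Matching on the product token rather than the whole string keeps the check working if the surrounding parts of the User-Agent ever change. A User-Agent can be spoofed, so treat a match as a hint rather than proof.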

How do I block 2-UAcomBot completely?

Add this to your /robots.txt:

User-agent: 2-UAcomBot
Disallow: /

We re-check your /robots.txt at most once per hour per host and cache the result for that hour, so a new rule takes effect on the first snapshot attempt after the cached copy expires, which is within one hour.
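
If you want to sanity-check a rule before publishing it, one option is Python's standard `urllib.robotparser`. The sketch below is only an approximation: it assumes the standard-library parser interprets your rules the same way 2-UAcomBot does, which this page does not guarantee. The helper name `bot_may_fetch` and the example.com URL are made up for the example.

```python
from urllib.robotparser import RobotFileParser


def bot_may_fetch(robots_txt: str, url: str, agent: str = "2-UAcomBot") -> bool:
    """Check whether `agent` may fetch `url` under the given robots.txt text.

    Uses Python's built-in parser, which may differ in edge cases from the
    matching logic 2-UAcomBot itself applies.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# The blocking rule from this page.
rules = "User-agent: 2-UAcomBot\nDisallow: /\n"
blocked = not bot_may_fetch(rules, "https://example.com/pricing")
```

With the rule above, `blocked` is `True` for 2-UAcomBot, while a crawler with a different product token is unaffected.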

What about a 5xx error on my robots.txt?

We treat a 5xx response as a temporary disallow, per RFC 9309: we skip snapshots until the next retry (around five minutes later). A 404 or 410 on /robots.txt means "no rules published", and we proceed.
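
The status handling described above can be sketched as a small decision function. This is an illustration of the stated behavior, not our actual implementation: the names `RobotsDecision`, `decide`, and `RETRY_SECONDS` are hypothetical, and statuses this page does not mention (for example 3xx or 403) are deliberately left as `UNSPECIFIED`.

```python
from enum import Enum


class RobotsDecision(Enum):
    USE_RULES = "parse the body and obey its rules"
    ALLOW_ALL = "no rules published; proceed with the snapshot"
    DISALLOW_TEMPORARILY = "skip snapshots until the next retry"
    UNSPECIFIED = "not covered by the text above"


# "Around five minutes" per the text above; the exact value is an assumption.
RETRY_SECONDS = 5 * 60


def decide(status: int) -> RobotsDecision:
    """Map the HTTP status of a /robots.txt fetch to a crawl decision."""
    if 500 <= status <= 599:
        return RobotsDecision.DISALLOW_TEMPORARILY
    if status in (404, 410):
        return RobotsDecision.ALLOW_ALL
    if 200 <= status <= 299:
        return RobotsDecision.USE_RULES
    return RobotsDecision.UNSPECIFIED
```

For example, `decide(503)` yields `DISALLOW_TEMPORARILY`, while `decide(404)` yields `ALLOW_ALL`.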

How often will I see 2-UAcomBot in my logs?

- Up to one snapshot per tracked page per configured interval (minimum six hours per page).

- Up to one /robots.txt fetch per host per hour.

- No JavaScript or headless-browser traffic, only plain HTTP.
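
To turn the limits above into a rough request count for your own host, a back-of-the-envelope estimate might look like the sketch below. It assumes the limits apply exactly as listed and ignores any retries after a 5xx on /robots.txt. The function name `max_requests` and its parameters are invented for this example.

```python
import math

MIN_SNAPSHOT_INTERVAL_HOURS = 6  # minimum interval per page, from the list above
ROBOTS_FETCHES_PER_HOUR = 1      # at most one /robots.txt fetch per host per hour


def max_requests(tracked_pages: int, hours: float, interval_hours: float = 6) -> int:
    """Estimate an upper bound on requests to one host over a window of `hours`."""
    if interval_hours < MIN_SNAPSHOT_INTERVAL_HOURS:
        raise ValueError("interval cannot be shorter than six hours")
    snapshots = tracked_pages * math.ceil(hours / interval_hours)
    robots_fetches = math.ceil(hours * ROBOTS_FETCHES_PER_HOUR)
    return snapshots + robots_fetches
```

Under these assumptions, 10 tracked pages at the minimum six-hour interval come to at most 40 snapshots plus 24 /robots.txt fetches over 24 hours, so `max_requests(10, 24)` returns 64.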

Learn more

For the full policy, including examples of allowing us on specific paths while disallowing the rest, see the article 2-UAcomBot: How We Identify Ourselves and Respect robots.txt.

If you still see requests you did not expect, email support@2-ua.com with a log excerpt and the target URL.