Google Search Console gives you real search demand: queries, clicks, impressions, CTR, and average position. A crawler gives you technical issues: broken URLs, missing titles, canonical problems, schema errors, image alt gaps, and performance regressions. Keyword rank tracking gives you a watched list of terms you care about. SEO Intelligence connects those three layers so the next action is not just "look at another report" - it is "fix this URL first, then track this keyword".

What SEO Intelligence means in 2-UA

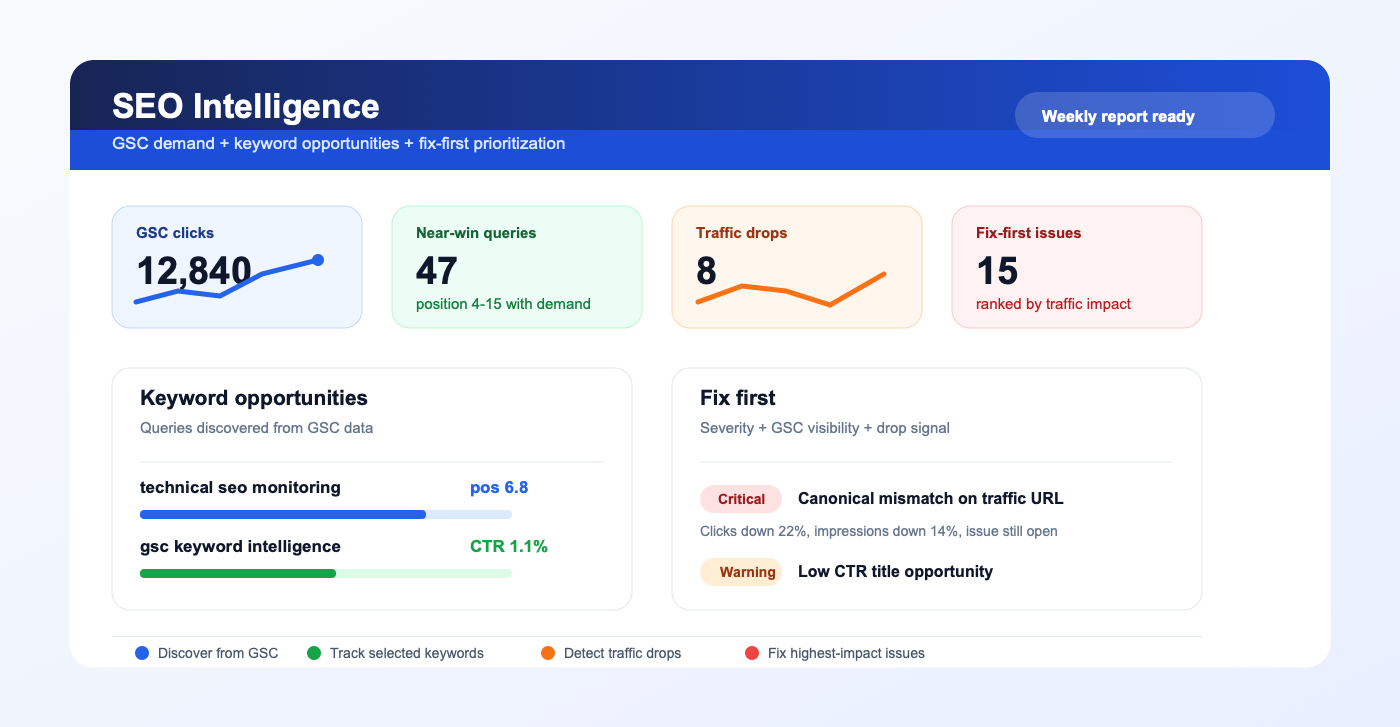

SEO Intelligence is the decision layer above Google Search Console, keyword tracking, and issue monitoring. It looks at recent GSC data, compares it with the previous period, finds keyword opportunities, and ranks open technical issues by likely traffic impact. Instead of treating every warning equally, the workflow asks a more useful question: which issue can affect visibility, clicks, or revenue first?

- GSC query data shows which searches already have impressions and rankings.

- Keyword opportunities highlight near-win queries and low-CTR results.

- Traffic drops compare the current 28-day window with the previous 28 days.

- Issue prioritization ranks open SEO problems by severity, GSC visibility, and recent drop signals.

- Alerts and reports turn the same signals into scheduled follow-up for the team.
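To make the 28-day comparison concrete, here is a minimal sketch of how two adjacent windows could be derived and compared. The function names and the `daily_clicks` mapping are assumptions for illustration, not 2-UA's internal implementation.

```python
from datetime import date, timedelta


def period_windows(end: date, days: int = 28):
    """Return ((cur_start, cur_end), (prev_start, prev_end)), all inclusive."""
    cur_start = end - timedelta(days=days - 1)
    prev_end = cur_start - timedelta(days=1)
    prev_start = prev_end - timedelta(days=days - 1)
    return (cur_start, end), (prev_start, prev_end)


def click_change(daily_clicks: dict, end: date, days: int = 28):
    """Sum clicks per window and return (current, previous, relative_change).

    daily_clicks is a hypothetical {date: clicks} mapping for one URL or site.
    relative_change is None when the previous window has no clicks.
    """
    (cs, ce), (ps, pe) = period_windows(end, days)
    current = sum(c for d, c in daily_clicks.items() if cs <= d <= ce)
    previous = sum(c for d, c in daily_clicks.items() if ps <= d <= pe)
    change = None if previous == 0 else (current - previous) / previous
    return current, previous, change
```

A negative `relative_change` marks a drop. Returning `None` for an empty previous window avoids reporting a misleading percentage for brand-new URLs.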

The workflow: from query data to paid SEO decisions

The practical value is not that you can see more data. The value is that you can sell, prioritize, or execute the next SEO action with evidence. A page with a missing title is bad. A page with a missing title, 18,000 impressions, position 8.4, and a recent click drop is urgent. That distinction is what turns monitoring into a paid feature.

1. Connect Google Search Console

Connect the site to Google Search Console with a Google account that has access to the property. 2-UA imports daily Search Analytics data and stores per-URL and query-level rows for the site. This data powers site-wide charts, URL-level charts, keyword rank tracking, and the SEO Intelligence view.
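For readers who want to see what a daily Search Analytics pull looks like, the sketch below builds a request body for the Search Console API `searchanalytics.query` method. The field names (`startDate`, `endDate`, `dimensions`, `rowLimit`, `startRow`) are part of that public API; the helper name and the choice of dimensions are assumptions, and this is not a description of how 2-UA performs its import.

```python
from datetime import date


def build_query_body(start: date, end: date, start_row: int = 0, row_limit: int = 25000) -> dict:
    """Build a searchanalytics.query request body (hypothetical helper).

    The API accepts rowLimit values from 1 to 25000; larger result sets are
    paged by increasing startRow.
    """
    if not 1 <= row_limit <= 25000:
        raise ValueError("row_limit must be between 1 and 25000")
    return {
        "startDate": start.isoformat(),
        "endDate": end.isoformat(),
        # One row per day, URL, and query: the granularity described above.
        "dimensions": ["date", "page", "query"],
        "rowLimit": row_limit,
        "startRow": start_row,
    }


# With google-api-python-client installed and credentials available,
# the body would typically be sent like this (not executed here):
#   service = build("searchconsole", "v1", credentials=creds)
#   service.searchanalytics().query(siteUrl=site_url, body=body).execute()
body = build_query_body(date(2024, 3, 1), date(2024, 3, 1))
```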

If you are setting up a new project, start by importing the top URLs from GSC into tracked URLs. That makes the first monitoring set match real search demand instead of a manually guessed list.
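As a rough illustration of that import step, the following sketch aggregates clicks per page and keeps the top URLs. The row shape (`page`, `clicks` keys) and the default limit of 100 are assumptions chosen for the example.

```python
from collections import Counter


def top_urls(rows, limit: int = 100):
    """Return up to `limit` page URLs ordered by total clicks, highest first.

    rows is a hypothetical iterable of dicts with "page" and "clicks" keys,
    for example query-level GSC rows for the last 28 days.
    """
    clicks = Counter()
    for row in rows:
        clicks[row["page"]] += row["clicks"]
    return [url for url, _ in clicks.most_common(limit)]
```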

2. Find keyword opportunities

Query-level GSC data is useful because it exposes demand you did not manually choose. SEO Intelligence can surface opportunities such as:

- Near-win keywords - queries ranking around positions 4 to 15 where a small improvement can create meaningful click growth.

- Low-CTR opportunities - queries with impressions and reasonable position, but weak click-through rate.

- Pages with demand but unresolved issues - URLs that already receive impressions and should not be left technically broken.

This is where SEO Intelligence overlaps with keyword rank tracking, but the two are not the same thing. SEO Intelligence discovers what is worth tracking. Keyword rank tracking monitors the chosen terms over time. The best product flow is: discover an opportunity, click "track this keyword", then receive alerts if it drops.
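The opportunity types above can be sketched as simple rules over query rows. The position range 4 to 15 comes from the description above; the impression floor, the CTR threshold, and the "reasonable position" cutoff of 10 are illustrative assumptions, not 2-UA's actual values.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    clicks: int
    impressions: int
    position: float  # average position as reported by GSC

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def classify(row: QueryRow, min_impressions: int = 100, low_ctr: float = 0.02):
    """Return opportunity labels for one query row (thresholds are hypothetical)."""
    labels = []
    if row.impressions < min_impressions:
        return labels  # too little demand to act on
    if 4 <= row.position <= 15:
        labels.append("near_win")
    if row.position <= 10 and row.ctr < low_ctr:
        labels.append("low_ctr")
    return labels
```

A query can carry both labels at once, which is usually the strongest signal to review the title and snippet before anything else.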

3. Prioritize issues by traffic impact

Classic SEO audit tools often produce long lists of problems. Long lists are easy to ignore. SEO Intelligence narrows the list by combining issue severity with GSC visibility. The "Fix first" queue favors issues that affect URLs with clicks, impressions, ranking opportunity, or a recent drop.

Example prioritization logic:

- A critical HTTP issue on a URL with traffic should outrank a minor warning on a low-value page.

- A canonical problem on a page that lost impressions should be reviewed before a generic info issue.

- A low-CTR query with high impressions should trigger title and meta description review.

- A position drop on a page with recent content changes should trigger a change-impact investigation.
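One way to express this logic is a score that multiplies issue severity by search visibility and a drop signal. The weights, the logarithmic scaling, and the field names below are assumptions made for this sketch; the actual "Fix first" scoring in 2-UA is not documented here.

```python
import math
from dataclasses import dataclass

# Hypothetical severity weights, chosen only for illustration.
SEVERITY_WEIGHT = {"critical": 3.0, "warning": 2.0, "info": 1.0}


@dataclass
class Issue:
    url: str
    kind: str
    severity: str
    impressions: int = 0
    clicks: int = 0
    click_change: float = 0.0  # -0.3 means clicks fell 30% versus the previous period


def priority(issue: Issue) -> float:
    """Higher score means fix sooner."""
    # Log scaling keeps very large pages from drowning out everything else.
    visibility = math.log10(1 + issue.impressions) + math.log10(1 + issue.clicks)
    # Only drops raise priority; growth leaves the score unchanged.
    drop_boost = 1.0 + max(0.0, -issue.click_change)
    return SEVERITY_WEIGHT[issue.severity] * (1.0 + visibility) * drop_boost


def fix_first(issues, limit: int = 10):
    """Return the top issues by priority score."""
    return sorted(issues, key=priority, reverse=True)[:limit]
```

The multiplicative form means an issue with no visibility still gets a baseline score from severity alone, so critical problems on new pages do not disappear from the queue.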

4. Use plan-based retention as a paid feature

Query history has product value. Short retention is enough for a free user to understand the feature. Longer retention is what teams need for monthly reporting, quarterly SEO reviews, seasonality, and client work. That makes retention a natural paid-plan lever.

- Free - small keyword limit and short history for trying the workflow.

- One site / personal - enough tracked keywords and history for one project.

- Enterprise tiers - larger keyword limits and longer retention as site count and team needs grow.

- Giga tiers - high keyword capacity and multi-year retention for agencies and large properties.

The important part is that deletion is scheduled by plan. When a site owner downgrades or a plan expires, query rows older than the new plan's retention window should be removed rather than kept forever. That keeps storage predictable and makes historical SEO intelligence a paid reason to upgrade.
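A scheduled cleanup of this kind could look roughly like the sketch below. The plan keys mirror the tiers listed above, but the retention periods in days are invented placeholders, not 2-UA's real limits.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days; real plan limits are not stated here.
RETENTION_DAYS = {"free": 30, "personal": 180, "enterprise": 480, "giga": 1095}


def retention_cutoff(plan: str, today: date) -> date:
    """Oldest date that the given plan is still allowed to keep."""
    return today - timedelta(days=RETENTION_DAYS[plan])


def rows_to_delete(rows, plan: str, today: date):
    """Select query rows older than the plan's cutoff.

    rows is a hypothetical list of dicts with a "date" key holding a date.
    """
    cutoff = retention_cutoff(plan, today)
    return [r for r in rows if r["date"] < cutoff]
```

Because the cutoff is computed from the current plan on every run, a downgrade takes effect at the next scheduled cleanup without any special migration step.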

5. Turn the same data into reports and alerts

The next monetizable layer is a scheduled report or alert. A weekly digest can include traffic drops, new keyword opportunities, fixed issues, new critical issues, and the top "fix first" recommendations. Agencies can turn that into client communication. In-house teams can turn it into sprint planning.

- Traffic drop alert - when clicks or impressions fall for an important URL.

- Keyword opportunity alert - when a query enters near-win range.

- Issue impact alert - when a new issue appears on a URL with search visibility.

- Weekly SEO Intelligence report - one prioritized summary for the site owner or client.
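As an example of how one of these alerts might be derived, the sketch below finds queries that entered the near-win range between two position snapshots. The snapshot shape (`{query: average_position}`) and the function name are assumptions for illustration.

```python
def entered_near_win(previous: dict, current: dict, low: float = 4.0, high: float = 15.0):
    """Return queries that are in the near-win range now but were not before.

    previous and current are hypothetical {query: average_position} snapshots.
    A query missing from `previous` counts as newly entered.
    """
    def in_range(position):
        return position is not None and low <= position <= high

    return sorted(
        query
        for query, position in current.items()
        if in_range(position) and not in_range(previous.get(query))
    )
```

Comparing snapshots rather than checking the current position alone keeps the alert from firing every week for a query that has been sitting at position 8 for months.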

FAQ: SEO Intelligence

How do I use it?

Connect Google Search Console for the site, import or add the URLs you want to monitor, then open the site's SEO Intelligence page. Start with the "Fix first" list, because it combines technical severity with GSC visibility. Then review keyword opportunities, choose the queries worth monitoring, and add them to keyword rank tracking. Use the weekly report or alerts to keep the workflow running without manually checking every table.

Is SEO Intelligence a replacement for Google Search Console?

No. GSC remains the source of truth for search performance. SEO Intelligence uses GSC data together with 2-UA monitoring data so you can connect rankings, traffic drops, and technical issues in one decision workflow.

How is this different from keyword rank tracking?

Keyword rank tracking monitors keywords you already selected. SEO Intelligence helps discover which keywords and URLs deserve attention based on real GSC impressions, CTR, average position, traffic drops, and open issues. They work best together: intelligence discovers, rank tracking monitors.

Why does retention depend on the plan?

Query-level GSC history becomes more valuable over time. Free users can validate the feature with a short history, while paid teams need longer retention for reporting, seasonality, and client proof. Scheduled cleanup keeps data storage aligned with the current plan.

What should I fix first?

Start with issues that are critical, affect URLs with clicks or impressions, and coincide with a recent traffic drop. After that, review near-win keywords and low-CTR opportunities, because those often lead to fast title, snippet, internal-link, or content-refresh tasks.

Can this become a lead magnet?

Yes. A free "SEO opportunity report from GSC" can show a limited number of near-win keywords, traffic drops, and fix-first issues. The paid version can unlock more keywords per site, longer retention, advanced alerts, and scheduled reports.

For setup context, read the Google Search Console integration guide and the Top 100 URL import guide.

Stop losing SEO performance to silent changes

If this workflow matches your current SEO bottleneck, do not postpone implementation. Teams usually lose the most traffic between detection and action, not between action and resolution. Start monitoring today and create your first baseline in under an hour.

Execution blueprint for SEO Intelligence and GSC keyword prioritization

Long-form SEO implementation fails when teams try to “fix everything” at once. The sustainable approach is to define a narrow execution lane, prove measurable movement, and scale based on validated impact. For prioritization workflows, this usually means setting explicit ownership, reporting cadence, and escalation thresholds.

A useful way to operationalize this is to split work into three layers: detection, validation, and rollout. Detection finds anomalies quickly. Validation confirms whether the anomaly is material or incidental. Rollout converts validated findings into engineering and content tasks with deadlines. If one layer is missing, the process becomes either noisy or slow.

90-day rollout plan

Days 1-14: baseline and instrumentation

- Define the monitored scope: templates, critical URLs, and ownership groups.

- Set expected behavior for status codes, redirects, and indexation-relevant rules.

- Enable alerts in your team channel and set an initial noise-control policy.

- Run the first full crawl and preserve it as a technical baseline snapshot.

- Document the current known issues so future alerts can be triaged faster.

Days 15-45: controlled improvement

- Move from URL-level fixes to issue-family fixes (template/system level).

- Review trends weekly for response time, quality checks, and crawl findings.

- Introduce tag-based segmentation if your team supports multiple page clusters.

- Track fix validation in re-crawls and keep a short evidence log for each change.

- Escalate only high-impact regressions to engineering to avoid context-switching overload.

Days 46-90: scale and commercialization

- Standardize recurring reports for stakeholders and client-facing communication.

- Harden your alert policy with quieter thresholds and clear severity levels.

- Expand monitoring from critical templates to full coverage where justified.

- Turn recurring findings into preventive engineering tasks, not one-off tickets.

- Connect technical trend movement to revenue-adjacent metrics for executive buy-in.

Measurement model: what to track weekly

You should define a compact KPI stack that reflects both technical quality and operational speed. Over-measuring creates reporting overhead and weakens decision quality. A practical KPI model for SEO prioritization workflows includes:

- Detection speed: time from change occurrence to first alert.

- Triage speed: time from alert to issue classification and owner assignment.

- Resolution speed: time from assignment to verified fix.

- Regression rate: how often a fixed issue class returns within 30 days.

- Coverage quality: share of critical pages included in active monitoring.

- Business relevance: proportion of high-impact issues in total issue volume.

For mature teams, the strongest KPI is not total issue count but high-impact issue recurrence. When recurrence falls, process quality is improving.
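The three speed metrics in this model can be computed from four timestamps per incident. The sketch below is one possible shape; the `Incident` fields and the choice of the median as the weekly summary are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Incident:
    changed_at: datetime   # when the change actually happened
    alerted_at: datetime   # first alert
    assigned_at: datetime  # classified and given an owner
    verified_at: datetime  # fix confirmed by a re-check


def _hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def weekly_kpis(incidents):
    """Median detection, triage, and resolution time in hours."""
    if not incidents:
        return {}
    return {
        "detection_hours": median(_hours(i.changed_at, i.alerted_at) for i in incidents),
        "triage_hours": median(_hours(i.alerted_at, i.assigned_at) for i in incidents),
        "resolution_hours": median(_hours(i.assigned_at, i.verified_at) for i in incidents),
    }
```

The median is used here instead of the mean so that a single long-running incident does not dominate the weekly number.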

Stakeholder alignment framework

Technical SEO execution usually fails at the handoff boundary. SEO specialists detect issues, but engineering sees isolated tasks without business context. Fix this by sending implementation-ready summaries:

- What changed (objective signal, not interpretation).

- Where it changed (template, segment, or specific URL class).

- Why it matters (indexation, visibility, trust, conversion risk).

- What to do next (single recommended action with acceptance criteria).

- How to verify (which re-check confirms the fix).

If your company runs weekly planning, summarize this in one page before sprint grooming. If you run continuous delivery, post a compact incident card into Slack or ticketing with direct links.
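The five-part summary above maps naturally onto a small data structure that renders to plain text for Slack or a ticket. The class and field names are hypothetical, and the rendering format is only one option.

```python
from dataclasses import dataclass


@dataclass
class IncidentCard:
    what_changed: str
    where: str
    why_it_matters: str
    next_action: str
    how_to_verify: str
    link: str = ""

    def render(self) -> str:
        """Render the card as plain text, one labeled line per field."""
        lines = [
            f"What changed: {self.what_changed}",
            f"Where: {self.where}",
            f"Why it matters: {self.why_it_matters}",
            f"Next action: {self.next_action}",
            f"How to verify: {self.how_to_verify}",
        ]
        if self.link:
            lines.append(f"Link: {self.link}")
        return "\n".join(lines)
```

Keeping every field required (except the link) forces the author to state a single recommended action and a verification step before the card reaches engineering.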

Common failure patterns and how to avoid them

- Too much scope: teams monitor everything and fix nothing. Start with critical assets.

- No baseline: every alert feels urgent without a reference snapshot.

- Tool-only mindset: dashboards do not create outcomes without process ownership.

- One-channel reporting: executives and implementers need different output layers.

- No post-fix validation: “done” without re-check creates hidden regressions.

Operational checklist you can reuse

- Confirm scope and ownership for monitored entities.

- Establish expected behavior and escalation policy.

- Launch baseline checks and preserve initial state.

- Run weekly issue-family review with implementation owners.

- Validate completed fixes with scheduled re-checks.

- Report only high-signal movements to leadership.

- Iterate thresholds every 2-4 weeks based on false-positive rate.

Commercial impact: turning technical work into revenue protection

Teams buy monitoring platforms when they can prove one thing: technical signals reduce preventable loss and shorten recovery time. In practice, you can demonstrate this by documenting incidents prevented, recovery cycles reduced, and implementation throughput improved.

This is where aggressive execution beats passive auditing: instead of producing occasional reports, you build an operating system for technical SEO quality. Once that system is in place, scaling to more URLs, more sites, and more stakeholders becomes predictable.

Advanced FAQ: SEO Intelligence and GSC keyword prioritization

How much historical data is enough for reliable decisions?

For most SEO teams, 4 to 8 weeks of consistent monitoring is enough to separate random fluctuation from structural movement. If your release velocity is high, use shorter review cycles but keep a rolling 8-week reference window. The key is consistency: gaps in monitoring reduce interpretability more than imperfect metrics.
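One simple way to apply a rolling 8-week reference window is to compare the latest week against the mean and standard deviation of the eight weeks before it. The z-score threshold of 2.0 and the function name are assumptions; other tests for structural change would work as well.

```python
from statistics import mean, stdev


def is_structural(weekly_values, window: int = 8, z_threshold: float = 2.0) -> bool:
    """Flag the latest week if it deviates strongly from the reference window.

    weekly_values is a hypothetical list of weekly totals (for example clicks),
    oldest first. Returns False when there is not enough history to judge.
    """
    if len(weekly_values) < window + 1:
        return False
    reference = weekly_values[-(window + 1):-1]
    latest = weekly_values[-1]
    sigma = stdev(reference)
    if sigma == 0:
        # A perfectly flat reference: any change at all is a deviation.
        return latest != reference[0]
    return abs(latest - mean(reference)) / sigma >= z_threshold
```

Returning `False` for short histories reflects the point about consistency: with gaps or too few weeks, the honest answer is that the movement cannot be classified yet.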

Should we optimize for issue count reduction or impact reduction?

Always optimize for impact reduction. Lower issue count can be misleading if high-severity classes remain unresolved. In mature workflows, teams track high-impact recurrence, time-to-resolution, and incident spread by template class.

What is the best cadence for reporting SEO prioritization work to leadership?

Weekly operational review plus a monthly executive summary works best. Weekly reports should focus on changes, actions, and blockers. Monthly reports should focus on trend direction, prevented incidents, and business-risk reduction. This two-layer model avoids both over-reporting and under-reporting.

How do we keep collaboration smooth with engineering teams?

Convert every finding into an implementation-ready task: define affected scope, expected behavior, acceptance criteria, and verification method. Engineering teams respond faster when tasks are deterministic. Avoid sending raw issue exports without business context.

When should we escalate from soft monitoring to stricter controls?

Escalate when any of the following is true: critical template regressions appear repeatedly, recovery time is increasing, or ownership is unclear across incidents. At that point, tighten alert policy, enforce scope ownership, and add stricter verification gates after releases.

How do we evaluate ROI for this workflow?

ROI appears in three layers: lower incident duration, fewer recurring regressions, and improved implementation confidence across teams. For stakeholder communication, quantify prevented loss events and reduced recovery effort rather than raw technical counts. This framing translates technical monitoring into business language that supports budget decisions.